Introduction

Around the beginning of July 2021, our security mitigation team discovered a 0day amplification vector from Ruckus that had not yet been publicly identified.

We should note up front that several days after our initial discovery of the 0day vector, Ruckus released a security bulletin outlining the SmartZone Reflective Amplification Attack Vulnerability.

As our self-imposed 30-day embargo has well and truly passed, we have decided to publish this blog outlining how our security team discovered the vector and the impact and implications this recent DDoS vector will have.

One of the many processes our security mitigation team conducts is daily attack reviews across our global network. It was during this review that one of our engineers discovered something that was out of the ordinary and required additional investigation.

Amongst the traffic of a mixed amplification attack was a source port our team had never categorised before. This unusual source port was delivering 60% of an attack that also involved other large amplification vectors.
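To illustrate the kind of breakdown that makes an unfamiliar source port stand out, here is a minimal sketch that tallies each source port's share of attack bytes. The flow records and the `port_share` helper are hypothetical and are not our actual review tooling; the byte counts are made up so that port 9001 carries 60% of the traffic.

```python
from collections import Counter

def port_share(flows):
    """Return {source_port: fraction of total bytes} for (src_port, byte_count) records."""
    totals = Counter()
    for src_port, nbytes in flows:
        totals[src_port] += nbytes
    grand_total = sum(totals.values())
    if grand_total == 0:
        return {}
    return {port: count / grand_total for port, count in totals.items()}

# Hypothetical flow samples: (source port, bytes observed).
sample_flows = [(9001, 400), (53, 250), (9001, 200), (123, 150)]
shares = port_share(sample_flows)

# List ports from largest to smallest share of the attack.
for port, share in sorted(shares.items(), key=lambda item: item[1], reverse=True):
    print(f"source port {port}: {share:.0%}")
```

A port that dominates the breakdown but has no known category is the signal that prompts a closer look.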

What is an amplification attack

Just a quick refresher: if you already know what a mixed amplification attack is, you can skip this part!

Basically, it's exactly as it sounds. There are services out there, like DNS servers such as Quad9 (read more about our global sponsorship assisting them), that turn domain names like facebook.com into an IP address like 192.0.2.1. Most of them are vital services, and without them the internet wouldn't work as it does today.

But due to implementation mistakes and misconfiguration, these DNS servers can be abused for attacks. The following is a perfect example of this.

Suppose you send a small query, let's say 50 bytes, to a DNS resolver and spoof the source IP in your query to be your victim's IP. The victim can then receive up to 4,000 bytes per spoofed request. There are thousands of DNS resolvers in the wild that can be abused for these attacks. Imagine this scaled out to thousands of resolvers and thousands of fake queries a second: the result is devastating to whoever receives it.
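The arithmetic behind this example can be sketched in a few lines. The 50 byte query and 4,000 byte response come from the example above; the query rate is a hypothetical figure chosen purely for illustration.

```python
def amplification_factor(request_bytes, response_bytes):
    """How many bytes reach the victim for every byte the attacker sends."""
    return response_bytes / request_bytes

def gigabits_per_second(packet_bytes, packets_per_second):
    """Bandwidth of a packet stream in Gbps."""
    return packet_bytes * 8 * packets_per_second / 1e9

REQUEST_BYTES = 50            # small spoofed DNS query (from the example)
RESPONSE_BYTES = 4000         # response delivered to the victim (from the example)
QUERIES_PER_SECOND = 100_000  # hypothetical total across all abused resolvers

factor = amplification_factor(REQUEST_BYTES, RESPONSE_BYTES)
attacker_gbps = gigabits_per_second(REQUEST_BYTES, QUERIES_PER_SECOND)
victim_gbps = gigabits_per_second(RESPONSE_BYTES, QUERIES_PER_SECOND)

print(f"amplification factor: {factor:.0f}x")
print(f"attacker sends {attacker_gbps:.2f} Gbps, victim receives {victim_gbps:.1f} Gbps")
```

Under these assumptions, the attacker needs only a fraction of a gigabit of upstream bandwidth to deliver a multi-gigabit attack.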

To make matters worse, the amplification server is effectively used as a middleman in this attack, making it practically impossible to trace the attack back to whoever is launching it. If that is not bad enough, because these services are usually vital, you can't simply block them outright, especially in the case of DNS servers.

Lastly, ‘mixed’ in the context of this blog just means that multiple amplification vectors (e.g. DNS, NTP, Memcached) are used in a single attack to increase its effectiveness.

Deep dive on the vector

Now, let’s have a deeper look into this 0day amplification vector.

While our mitigation systems already effectively blocked the attacks using this new vector, it was still imperative that we categorised it correctly and wrote even stricter rules. This allowed us to ensure it was completely blocked and showed up correctly for customers in their reports and live attack overview portal.

As part of trying to categorise the vector, we began looking for any service that ran on port 9001, but nothing in RFCs or unofficial assignments really lined up with the response we captured or with our ethical scanning of the IPs that sent the attack traffic.

We decided to connect to the port and a few other ports on the IPs in question. Upon connecting via SSH, we were greeted by a big banner that said 'SmartZone 100', which lined up with Ruckus network controllers. This held true for almost every IP we checked.

With this new information, we tentatively categorised the attack as a 'Ruckus SmartZone' amplification attack. At the time we searched around and found that nobody else had made the discovery, so we had no second confirmation.

A few days later, a member of our security team followed up and found a post on the Ruckus forum, since hidden, from another engineer reporting the issue to Ruckus. In the post, one of the engineers from Ruckus said "we use port 9001 for Elastic Search DB update and also sync with member node in the vSZ/SZ Cluster." This was the second confirmation we needed and suggested our security team had correctly identified the vector.

This also meant Ruckus was aware of the issue and was likely already preparing a patch, so we didn't need to contact them about it. We would have posted on the forum but, as mentioned, the post was hidden.

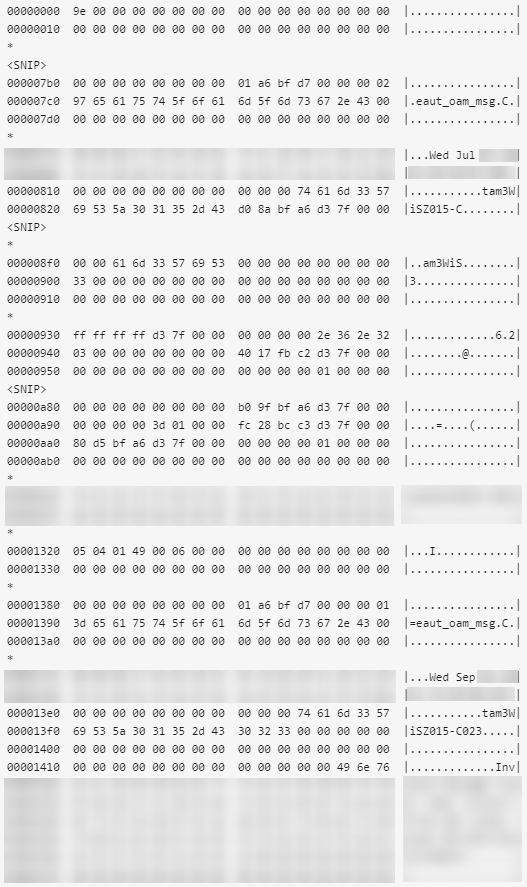

After identifying the vector, our security team went over the attack traffic (mostly the payloads) to find some commonalities to block. Luckily the source port is always the same, which is true for all amplification vectors, and the payload also had a number of commonalities.

Once we had found these commonalities, we wrote a specific rule, pushed it globally in detection-only mode and left it for 24 hours.

Our team painstakingly reviewed everything that was detected in the 24 hours that passed, to ensure the rule wasn't too broad and wasn't catching legitimate traffic. Luckily it wasn't, so we decided to activate it globally to be triggered when needed.
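The detect-first rollout described above can be sketched as follows. Everything here is hypothetical: the `Rule` and `Packet` types, the size threshold and the mode names are illustrative assumptions, not our real rule format, and the actual payload commonalities are deliberately left out.

```python
from dataclasses import dataclass, replace

@dataclass(frozen=True)
class Packet:
    src_port: int
    payload_len: int

@dataclass(frozen=True)
class Rule:
    src_port: int          # reflected traffic arrives from the service port
    min_payload_len: int   # hypothetical size threshold, not the real signature
    mode: str              # "detect" only logs matches, "block" drops them

    def matches(self, pkt):
        return pkt.src_port == self.src_port and pkt.payload_len >= self.min_payload_len

def apply_rule(rule, pkt):
    """Return the action taken for a packet: 'pass', 'log' or 'drop'."""
    if not rule.matches(pkt):
        return "pass"
    return "drop" if rule.mode == "block" else "log"

# Step 1: deploy in detection mode and review what it would have caught.
detect_rule = Rule(src_port=9001, min_payload_len=1000, mode="detect")
traffic = [Packet(9001, 5200), Packet(443, 1400), Packet(9001, 80)]
review = [(pkt, apply_rule(detect_rule, pkt)) for pkt in traffic]

# Step 2: once the review shows no legitimate traffic is caught, switch to blocking.
block_rule = replace(detect_rule, mode="block")
actions = [apply_rule(block_rule, pkt) for pkt in traffic]
print(actions)
```

Running a rule in detection mode first means a rule that turns out to be too broad costs nothing more than some noisy logs.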

Identifying the payload

Now that we had identified the vector and activated the rule across our global network, the only thing missing was figuring out what needed to be sent to trigger this particular attack. Once we had that, we could work out a rough amplification factor/ratio.

We checked our honeypots for any recent uptick in odd payloads sent to port 9001, but there wasn't anything that particularly stood out. Our security team tried a few things but eventually settled on a small malformed payload that triggers an error response.

With a request of less than 30 bytes eliciting a 5,000-8,000 byte response, it was quite a large amplification.
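As a rough worked figure, the sizes above translate into the following amplification range. This sketch treats 30 bytes as the upper bound on the request size, so the results are lower bounds; the exact payload size is not disclosed here.

```python
MAX_REQUEST_BYTES = 30        # the request was under 30 bytes
MIN_RESPONSE_BYTES = 5000
MAX_RESPONSE_BYTES = 8000

# A smaller request would only increase these ratios.
low_factor = MIN_RESPONSE_BYTES / MAX_REQUEST_BYTES
high_factor = MAX_RESPONSE_BYTES / MAX_REQUEST_BYTES

print(f"amplification of at least {low_factor:.0f}x to {high_factor:.0f}x")
```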

We're being deliberately vague here for a few reasons:

- This attack isn't saturated, meaning not many threat actors are aware of it, so it can generate large attacks consistently

- These are network controller devices that are likely high performance and might even sit close to network edges on large ports, away from any rate limits, again resulting in large attacks with just a handful of vulnerable nodes

- It takes a long time to patch devices; for example, NTP amplification vectors have been known about for nearly a decade and are still used to launch devastating attacks daily

How to protect your business

DDoS attacks continue to rise, with new vectors being identified more and more frequently. The categorisation of a 0day vector by our security team back in July 2021 meant we were able to activate the necessary rules across our global network, ensuring all of our customers were protected against the specific vector.

Although the initial payload for this Ruckus vector was quite small, the end result was quite a large amplification attack. It highlighted the continued increase in the sophistication of DDoS attacks: more often than not, the attack vectors that cause the most damage to the receiver are small and understated on their own, but can infiltrate a network and cause severe operational or financial implications.

With our DDoS protection inline with your network, an attack can be mitigated in under 1 second. Advanced attack detection and rule sets with real-time packet inspection ensure your network is protected. Our security team reviews attacks in real time, 24/7.

Our DDoS protection takes a matter of minutes to turn up and seamlessly integrates within your network. Our global network edge ensures threats are surgically removed at the edge before reaching your services.

Global Secure Layer DDoS Protection Solution

Author: GSL Security Team

If you would like to talk to our team about DDoS mitigation services, please don't hesitate to get in touch.